Span.subtree: This Span property yields the tokens that are within the span and the tokens which descend from them.

Span.vector: This Span property represents a real-valued meaning. The default value is an average of the token vectors. An example of the Span.vector property starts as follows: `doc = nlp_model("The website is .")`

Span.vector_norm: This Span property represents the L2 norm of the span's vector representation. An example of the Span.vector_norm property is as follows: `doc.vector_norm != doc.`
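To make the relationship between Span.vector and Span.vector_norm concrete, here is a minimal sketch. It assumes spaCy v3 and uses a blank English pipeline with two hand-made 3-dimensional vectors; the vector values are hypothetical and stand in for the vectors a trained model would provide.

```python
import numpy
import spacy

# Assumption: a blank pipeline, so no model download is required.
nlp = spacy.blank("en")

# Hypothetical toy vectors; a real model ships its own vector table.
nlp.vocab.set_vector("New", numpy.asarray([1.0, 0.0, 2.0], dtype="float32"))
nlp.vocab.set_vector("York", numpy.asarray([3.0, 4.0, 0.0], dtype="float32"))

doc = nlp("I like New York in Autumn.")
span = doc[2:4]  # the tokens "New" and "York"

# Span.vector defaults to the average of the token vectors.
token_average = numpy.mean([token.vector for token in span], axis=0)

# Span.vector_norm is the L2 norm of Span.vector.
manual_norm = numpy.linalg.norm(span.vector)

print(span.text, span.vector, span.vector_norm)
```

With these toy values the average is (2, 2, 1), so the norm comes out as 3.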

Span.root: This Span property provides the token with the shortest path to the root of the sentence. If multiple tokens are equally high in the tree, it takes the first one. An example of the Span.root property starts as follows: `doc = nlp_model("I like New York in Autumn.")`. Another example of the Span.root property uses token indices: `I, like, new, york, in_, autumn, dot = range(len(doc))`

Span.lefts: This Span property is used for the tokens that are to the left of the span and whose heads are within the span.

Span.rights: This Span property is used for the tokens that are to the right of the span and whose heads are within the span.

Span.n_rights: This Span property gives the number of tokens that are to the right of the span and whose heads are within the span.

Span.n_lefts: This Span property gives the number of tokens that are to the left of the span and whose heads are within the span.
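The root, lefts, rights, n_lefts and n_rights properties all depend on a dependency parse. The sketch below assumes spaCy v3 and, instead of loading a trained parser, builds the Doc by hand. The `heads` and `deps` values are an assumed parse of the example sentence, chosen for illustration; a real model may attach some tokens differently.

```python
import spacy
from spacy.tokens import Doc

nlp = spacy.blank("en")

words = ["I", "like", "New", "York", "in", "Autumn", "."]
# Assumed parse: each entry is the index of that token's head.
heads = [1, 1, 3, 1, 3, 4, 1]
deps = ["nsubj", "ROOT", "compound", "dobj", "prep", "pobj", "punct"]
doc = Doc(nlp.vocab, words=words, heads=heads, deps=deps)

new_york = doc[2:4]

# Token in the span with the shortest path to the sentence root.
root_text = new_york.root.text                    # "York"

# Tokens left of doc[3:7] whose heads are inside that span.
lefts = [token.text for token in doc[3:7].lefts]  # ["New"]

# Tokens right of "New York" whose heads are inside it.
rights = [token.text for token in new_york.rights]  # ["in"]

# The n_* properties count the same tokens.
n_lefts = doc[3:7].n_lefts
n_rights = new_york.n_rights

print(root_text, lefts, rights, n_lefts, n_rights)
```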

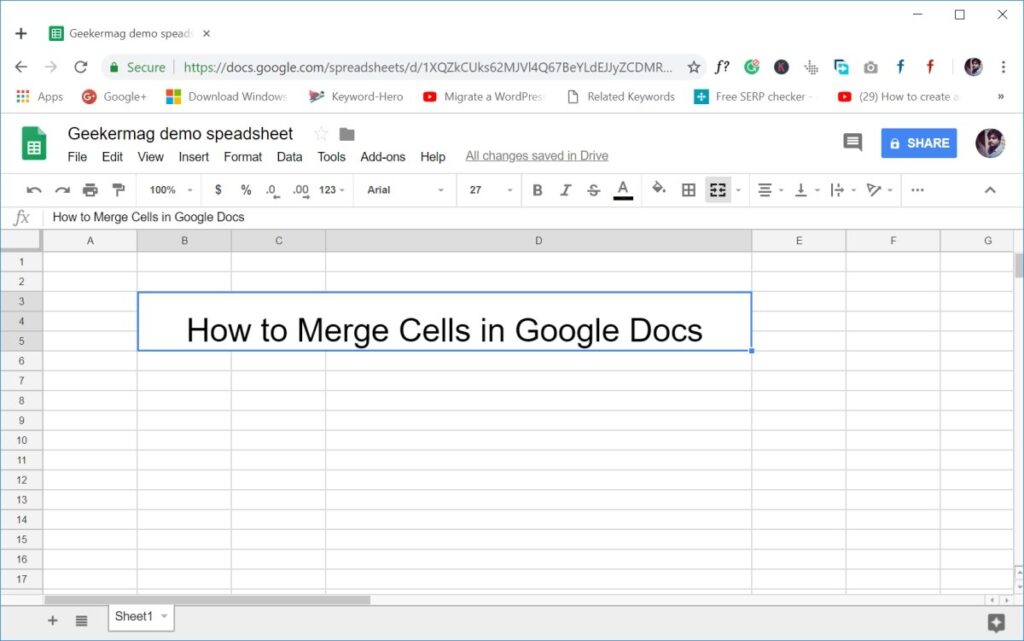

In this chapter, let us learn the Span properties in spaCy.

Properties

Following are the properties of the Span class in spaCy.

| Property | Description |
|---|---|
| as_doc | Used to create a new Doc object corresponding to the Span. |
| root | To provide the token with the shortest path to the root of the sentence. |
| lefts | Used for the tokens that are to the left of the span whose heads are within the span. |
| rights | Used for the tokens that are to the right of the span whose heads are within the span. |
| subtree | To yield the tokens that are within the span and the tokens which descend from them. |
| vector_norm | Represents the L2 norm of the span's vector representation. |

Span.ents: This Span property is used for the named entities in the span. If the entity recogniser has been applied, this property returns a tuple of named entity Span objects. An example of the Span.ents property starts as follows: `doc = nlp_model("This is .")`

Span.as_doc: As the name suggests, this creates a new Doc object corresponding to the Span. In the spaCy API it is called as a method, `span.as_doc()`. The new Doc holds a copy of the data too.

Since you are using the same nlp object, the Vocab can be shared across all your different Doc objects, so it just needs to be serialized once.

- Serialize every Doc object using the to_disk method into different files in a docs folder.
- Deserialize every Doc object in the docs folder and append them to a list.

The following code should work: `import spacy` `# deserialize vocab - common for all docs`

I think this is as space-efficient as it can get.
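The per-file approach described in this answer (save the Vocab once, then one file per Doc) can be sketched as follows. This is a reconstruction under assumptions, not the answer's original code: it assumes spaCy v3, a blank English pipeline, and hypothetical file and folder names (`vocab`, `docs`, `doc_0000.bin`) inside a temporary directory.

```python
import tempfile
from pathlib import Path

import spacy
from spacy.tokens import Doc
from spacy.vocab import Vocab

nlp = spacy.blank("en")
texts = ["I like New York in Autumn.", "Give it back!", "He pleaded."]
docs = [nlp(text) for text in texts]

# Hypothetical layout: <tmp>/vocab plus <tmp>/docs/doc_0000.bin, ...
out_dir = Path(tempfile.mkdtemp())
docs_dir = out_dir / "docs"
docs_dir.mkdir()

# Serialize the shared vocab once.
nlp.vocab.to_disk(out_dir / "vocab")

# Serialize every Doc into its own file in the docs folder.
for i, doc in enumerate(docs):
    doc.to_disk(docs_dir / f"doc_{i:04d}.bin")

# Deserialize vocab - common for all docs.
vocab = Vocab().from_disk(out_dir / "vocab")

# Deserialize every Doc in the docs folder and append it to a list.
loaded_docs = []
for path in sorted(docs_dir.iterdir()):
    loaded_docs.append(Doc(vocab).from_disk(path))

loaded_texts = [doc.text for doc in loaded_docs]
print(loaded_texts)
```

The zero-padded file names keep `sorted()` in the original document order.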
I'm working with very large collections of short texts that I need to annotate and save to disk. Ideally I'd like to save/load them as spaCy Doc objects. Obviously I don't want to save the Language or Vocab objects more than once (but I'm happy to save/load them once for a collection of Docs).

The Doc object has a to_disk method and a to_bytes method, but it's not immediately obvious to me how to save a bunch of documents to the same file. Is there a preferred way of doing this? I'm looking for something as space-efficient as possible.

Currently I'm doing this, which I'm not very happy with: a function `def serialize_docs(docs):` that writes spaCy Doc objects to a newline-delimited string that can be used to load them later, given the same Vocab object that was used to create them. It ends with `return '\n'.join()`.

As of spaCy 2.2, the correct answer is to use DocBin.

If you're working with lots of data, you'll probably need to pass analyses between machines, either to use something like Dask or Spark, or even just to save out work to disk. Often it's sufficient to use the Doc.to_array functionality for this, and just serialize the numpy arrays – but other times you want a more general way to save and restore Doc objects.

The DocBin class makes it easy to serialize and deserialize a collection of Doc objects together, and is much more efficient than calling Doc.to_bytes on each individual Doc object. You can also control what data gets saved, and you can merge pallets together for easy map/reduce-style processing.

The collection is created with `doc_bin = DocBin(attrs=, store_user_data=True)` and restored in a new process with `doc_bin = DocBin().from_bytes(bytes_data)`.
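A minimal sketch of the DocBin approach for saving many Doc objects to one file, assuming a blank English pipeline and a hypothetical file name (`docs.bin`) in a temporary directory. It uses the default attribute set rather than a custom `attrs` list, and writes the bytes with plain file I/O.

```python
import tempfile
from pathlib import Path

import spacy
from spacy.tokens import DocBin

nlp = spacy.blank("en")
texts = ["I like New York in Autumn.", "Give it back!", "He pleaded."]

# Collect all annotated docs in a single DocBin.
doc_bin = DocBin(store_user_data=True)
for doc in nlp.pipe(texts):
    doc_bin.add(doc)

# One byte string for the whole collection, written to one file.
bytes_data = doc_bin.to_bytes()
path = Path(tempfile.mkdtemp()) / "docs.bin"  # hypothetical file name
path.write_bytes(bytes_data)

# Later (for example in a new process): restore the collection.
restored_bin = DocBin().from_bytes(path.read_bytes())
restored_docs = list(restored_bin.get_docs(nlp.vocab))
restored_texts = [doc.text for doc in restored_docs]
print(restored_texts)
```

The Vocab is not stored in the file; `get_docs` needs a vocab to rebuild the Doc objects, so the same pipeline (or its saved Vocab) has to be available when loading.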
